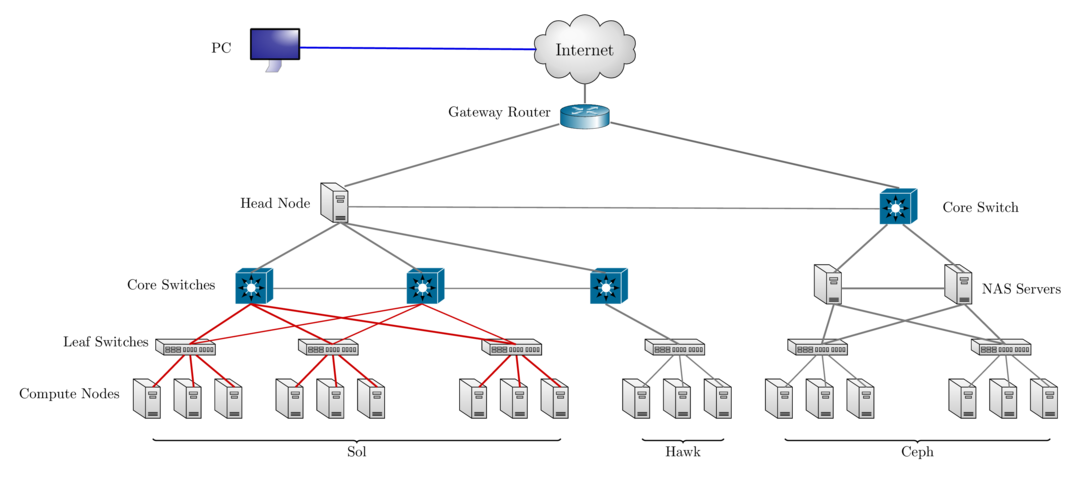

class: center, middle, inverse, title-slide

# 2021 HPC Programming Workshop
### Research Computing
### <a href="https://researchcomputing.lehigh.edu" class="uri">https://researchcomputing.lehigh.edu</a>

---
class: myback

# About Us

* Who?
  * A unit of Lehigh's Library & Technology Services within the Center for Innovation in Teaching & Learning.
* Our Mission
  - We enable Lehigh faculty, researchers, and scholars to achieve their goals by providing computational resources (hardware, software, and storage), consulting, and training.
* Research Computing Staff
  * __Alex Pacheco, Manager & XSEDE Campus Champion__
  * Steve Anthony, System Administrator
  * Dan Brashler, CAS Computing Consultant
  * _Sachin Joshi, Data Analyst & Visualization Specialist_

---
# Workshop Schedule

| Day | Session | Instructor |
|:---:|:----:|:----:|
| June 28 | Fortran | Alex Pacheco |
| June 29 | C | Sachin Joshi |
| June 30 | Debugging & Profiling | Alex Pacheco |

* 9 AM - 4 PM on all days, with a lunch break from noon to 1 PM
* The afternoon of June 30 is free time for
  * programming-related discussion and Q&A,
  * completing exercises, and
  * help sessions and consultation on programming in your research
* Upcoming Workshop
  * Parallel Programming Workshop on July 13-15 covering OpenMP, OpenACC, and MPI
  * [Registration](https://lehigh.co1.qualtrics.com/jfe/form/SV_6LjCFTLfSUay2mq)
* Computing time for the workshop is provided by [NSF Campus Cyberinfrastructure award 2019035](https://www.nsf.gov/awardsearch/showAward?AWD_ID=2019035&HistoricalAwards=false).

---
# Sol: Lehigh's Shared HPC Cluster

* Built by investments from the Provost<sup>a</sup> and faculty.
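The SUs values in the Sol table below (and in the Hawk table) are consistent with one SU per CPU core per hour over a 365-day year, i.e. 24 × 365 = 8760 hours; for the first row, 180 × 8760 = 1,576,800. The slides do not define an SU, so treat "1 SU = 1 core-hour" as an assumption. A minimal bash sketch of that arithmetic:

```bash
# Sketch: reproduce the "SUs" column, ASSUMING 1 SU = 1 CPU-core-hour
# and a 365-day year (24 * 365 = 8760 hours).
hours_per_year=$(( 24 * 365 ))

# Print the annual SUs for a given number of CPU cores.
annual_sus() {
  local cores=$1
  echo $(( cores * hours_per_year ))
}

annual_sus 180    # first Sol row (9 x E5-2650 v3): prints 1576800
annual_sus 2508   # Sol total: prints 21970080
```

The same formula reproduces every row and total in both tables, e.g. 1752 cores × 8760 = 15,347,520 for Hawk.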
<table> <thead> <tr> <th style="text-align:right;"> Nodes </th> <th style="text-align:left;"> Intel Xeon CPU Type </th> <th style="text-align:left;"> CPU Speed (GHz) </th> <th style="text-align:right;"> CPUs </th> <th style="text-align:right;"> GPUs </th> <th style="text-align:right;"> CPU Memory (GB) </th> <th style="text-align:right;"> GPU Memory (GB) </th> <th style="text-align:right;"> CPU TFLOPS </th> <th style="text-align:right;"> GPU TFLOPs </th> <th style="text-align:right;"> SUs </th> </tr> </thead> <tbody> <tr> <td style="text-align:right;"> 9 </td> <td style="text-align:left;"> E5-2650 v3 </td> <td style="text-align:left;"> 2.3 </td> <td style="text-align:right;"> 180 </td> <td style="text-align:right;"> 10 </td> <td style="text-align:right;"> 1024 </td> <td style="text-align:right;"> 80 </td> <td style="text-align:right;"> 5.7600 </td> <td style="text-align:right;"> 2.570 </td> <td style="text-align:right;"> 1576800 </td> </tr> <tr> <td style="text-align:right;"> 33 </td> <td style="text-align:left;"> E5-2670 v3 </td> <td style="text-align:left;"> 2.3 </td> <td style="text-align:right;"> 792 </td> <td style="text-align:right;"> 62 </td> <td style="text-align:right;"> 4224 </td> <td style="text-align:right;"> 496 </td> <td style="text-align:right;"> 25.3440 </td> <td style="text-align:right;"> 15.934 </td> <td style="text-align:right;"> 6937920 </td> </tr> <tr> <td style="text-align:right;"> 14 </td> <td style="text-align:left;"> E5-2650 v4 </td> <td style="text-align:left;"> 2.2 </td> <td style="text-align:right;"> 336 </td> <td style="text-align:right;"> 0 </td> <td style="text-align:right;"> 896 </td> <td style="text-align:right;"> 0 </td> <td style="text-align:right;"> 9.6768 </td> <td style="text-align:right;"> 0.000 </td> <td style="text-align:right;"> 2943360 </td> </tr> <tr> <td style="text-align:right;"> 1 </td> <td style="text-align:left;"> E5-2640 v3 </td> <td style="text-align:left;"> 2.6 </td> <td style="text-align:right;"> 16 </td> <td 
style="text-align:right;"> 0 </td> <td style="text-align:right;"> 512 </td> <td style="text-align:right;"> 0 </td> <td style="text-align:right;"> 0.5632 </td> <td style="text-align:right;"> 0.000 </td> <td style="text-align:right;"> 140160 </td> </tr> <tr> <td style="text-align:right;"> 24 </td> <td style="text-align:left;"> Gold 6140 </td> <td style="text-align:left;"> 2.3 </td> <td style="text-align:right;"> 864 </td> <td style="text-align:right;"> 48 </td> <td style="text-align:right;"> 4608 </td> <td style="text-align:right;"> 528 </td> <td style="text-align:right;"> 41.4720 </td> <td style="text-align:right;"> 18.392 </td> <td style="text-align:right;"> 7568640 </td> </tr> <tr> <td style="text-align:right;"> 6 </td> <td style="text-align:left;"> Gold 6240 </td> <td style="text-align:left;"> 2.6 </td> <td style="text-align:right;"> 216 </td> <td style="text-align:right;"> 0 </td> <td style="text-align:right;"> 1152 </td> <td style="text-align:right;"> 0 </td> <td style="text-align:right;"> 10.3680 </td> <td style="text-align:right;"> 0.000 </td> <td style="text-align:right;"> 1892160 </td> </tr> <tr> <td style="text-align:right;"> 2 </td> <td style="text-align:left;"> Gold 6230R </td> <td style="text-align:left;"> 2.1 </td> <td style="text-align:right;"> 104 </td> <td style="text-align:right;"> 0 </td> <td style="text-align:right;"> 768 </td> <td style="text-align:right;"> 0 </td> <td style="text-align:right;"> 4.3264 </td> <td style="text-align:right;"> 0.000 </td> <td style="text-align:right;"> 911040 </td> </tr> <tr> <td style="text-align:right;"> 89 </td> <td style="text-align:left;"> </td> <td style="text-align:left;"> </td> <td style="text-align:right;"> 2508 </td> <td style="text-align:right;"> 120 </td> <td style="text-align:right;"> 13184 </td> <td style="text-align:right;"> 1104 </td> <td style="text-align:right;"> 97.5104 </td> <td style="text-align:right;"> 36.896 </td> <td style="text-align:right;"> 21970080 </td> </tr> </tbody> </table> <!-- 87 
nodes interconnected by 2:1 oversubscribed Infiniband EDR (100Gb/s) fabric. Only 1.40M SUs from Provost investment available to Lehigh researchers. -->

.footnote[
a: 8 Intel Xeon E5-2650 v3 nodes funded by the Provost's investment in 2016.
]

---
# Hawk

* Funded by [NSF Campus Cyberinfrastructure award 2019035](https://www.nsf.gov/awardsearch/showAward?AWD_ID=2019035&HistoricalAwards=false).
  - PI: __Ed Webb__ (MEM).
  - co-PIs: Balasubramanian (MEM), Fredin (Chemistry), Pacheco (LTS), and __Rangarajan__ (ChemE).
  - Sr. Personnel: Anthony (LTS), Reed (Physics), Rickman (MSE), and __Takáč__ (ISE).

<table> <thead> <tr> <th style="text-align:right;"> Nodes </th> <th style="text-align:left;"> Intel Xeon CPU Type </th> <th style="text-align:left;"> CPU Speed (GHz) </th> <th style="text-align:right;"> CPUs </th> <th style="text-align:right;"> GPUs </th> <th style="text-align:right;"> CPU Memory (GB) </th> <th style="text-align:right;"> GPU Memory (GB) </th> <th style="text-align:right;"> CPU TFLOPS </th> <th style="text-align:right;"> GPU TFLOPs </th> <th style="text-align:right;"> SUs </th> </tr> </thead> <tbody> <tr> <td style="text-align:right;"> 26 </td> <td style="text-align:left;"> Gold 6230R </td> <td style="text-align:left;"> 2.1 </td> <td style="text-align:right;"> 1352 </td> <td style="text-align:right;"> 0 </td> <td style="text-align:right;"> 9984 </td> <td style="text-align:right;"> 0 </td> <td style="text-align:right;"> 56.2432 </td> <td style="text-align:right;"> 0.00000 </td> <td style="text-align:right;"> 11843520 </td> </tr> <tr> <td style="text-align:right;"> 4 </td> <td style="text-align:left;"> Gold 6230R </td> <td style="text-align:left;"> 2.1 </td> <td style="text-align:right;"> 208 </td> <td style="text-align:right;"> 0 </td> <td style="text-align:right;"> 6144 </td> <td style="text-align:right;"> 0 </td> <td style="text-align:right;"> 8.6528 </td> <td style="text-align:right;"> 0.00000 </td> <td style="text-align:right;"> 1822080 </td> </tr> <tr> <td
style="text-align:right;"> 4 </td> <td style="text-align:left;"> Gold 5220R </td> <td style="text-align:left;"> 2.2 </td> <td style="text-align:right;"> 192 </td> <td style="text-align:right;"> 32 </td> <td style="text-align:right;"> 768 </td> <td style="text-align:right;"> 512 </td> <td style="text-align:right;"> 4.3008 </td> <td style="text-align:right;"> 8.10816 </td> <td style="text-align:right;"> 1681920 </td> </tr> <tr> <td style="text-align:right;"> 34 </td> <td style="text-align:left;"> </td> <td style="text-align:left;"> </td> <td style="text-align:right;"> 1752 </td> <td style="text-align:right;"> 32 </td> <td style="text-align:right;"> 16896 </td> <td style="text-align:right;"> 512 </td> <td style="text-align:right;"> 69.1968 </td> <td style="text-align:right;"> 8.10816 </td> <td style="text-align:right;"> 15347520 </td> </tr> </tbody> </table>

* 798 TB (raw) of Ceph-based storage
* Production: **Feb 1, 2021**

---
# Network Layout: Sol, Hawk & Ceph

---
# Accessing Sol & Hawk

* Sol is accessible using ssh while on Lehigh's network:
  ```bash
  ssh username@sol.cc.lehigh.edu
  ```
* Windows PCs require an SSH client such as [MobaXterm](https://mobaxterm.mobatek.net/) or [PuTTY](https://putty.org/).
* On Mac and Linux PCs, ssh is built into the terminal application.
* If you are not on Lehigh's network, log in to the SSH gateway
  ```bash
  ssh username@ssh.cc.lehigh.edu
  ```
  and then log in to Sol as above.
  - Alternatively, jump through the gateway in one step:
    ```bash
    ssh -J username@ssh.cc.lehigh.edu username@sol.cc.lehigh.edu
    ```
  - [Click here](https://confluence.cc.lehigh.edu/x/JhH5Bg) to learn how to configure MobaXterm to use the SSH gateway.

---
# Open OnDemand

* An NSF-funded, open-source HPC portal based on the Ohio Supercomputer Center's original OnDemand portal.
* Goals: give system administrators an easy way to provide web access to their HPC resources, including, but not limited to:
  - Plugin-free web experience
  - Easy file management
  - Command-line shell access
  - Job management and monitoring across different batch servers and resource managers
  - Graphical desktop environments and desktop applications

---
# Connecting to the HPC Portal

* https://hpcportal.cc.lehigh.edu: available on campus or via VPN.
* Chrome or Firefox preferred
  * At least one user has reported problems with Safari.

---
# Dashboard

---
# Shell Access

* Click Clusters > Sol Shell Access

---
# File Management

.pull-left[
* Launch File Explorer
* Navigate Storage
* Transfer Files to/from Sol
* Create, Edit, Delete, and Rename Files & Directories
]

---
# HPC Workshop

* If you are an HPC user, log in to the cluster as you normally do.
* Otherwise, log in to the HPC Portal.
* Start an interactive session on the workshop partition, either
  * by connecting to the terminal using the Shell Access tab and requesting an interactive session:
    * `srun -p workshop -A hpc2021_prog_083121 -n 1 -t 60 --pty /bin/bash --login`
  * or by selecting Terminal from "Interactive Apps" and then
    * entering hpc2021_prog_083121 for the account,
    * selecting 1 CPU,
    * setting a wall clock time of 1 (for 1 hour), and
    * selecting the workshop partition.

---
# Terminal App for HPC Workshop

* Copy Fortran and Debugging demo and exercise files
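---
# Optional: SSH Gateway Shortcut

If you connect from off campus often, the one-step `ssh -J` jump from the "Accessing Sol & Hawk" slide can be saved in your OpenSSH client configuration with the `ProxyJump` option, which is the directive that `-J` sets. This is a minimal sketch, assuming OpenSSH 7.3 or newer and a hypothetical host alias `sol`; replace `username` with your Lehigh username.

```bash
# Sketch: save the SSH gateway hop so that "ssh sol" works from off campus.
# "sol" is a hypothetical alias; replace "username" with your Lehigh username.
mkdir -p "$HOME/.ssh"
chmod 700 "$HOME/.ssh"

# Append a host entry (run once; re-running appends a duplicate entry).
cat >> "$HOME/.ssh/config" <<'EOF'
Host sol
    HostName sol.cc.lehigh.edu
    User username
    ProxyJump username@ssh.cc.lehigh.edu
EOF

# ssh rejects a config file that other users can write to.
chmod 600 "$HOME/.ssh/config"
```

Afterwards, `ssh sol` should open the same two-hop connection as the `ssh -J` command on that slide.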